A non-negative scalar attached to each training example, measuring how far that example slips inside or across the SVM margin envelope. Slack variables convert the hard “everyone outside the margin” constraint into a budgeted penalty — and turn an infeasible separation problem into a tractable one.

The Constraint Modification

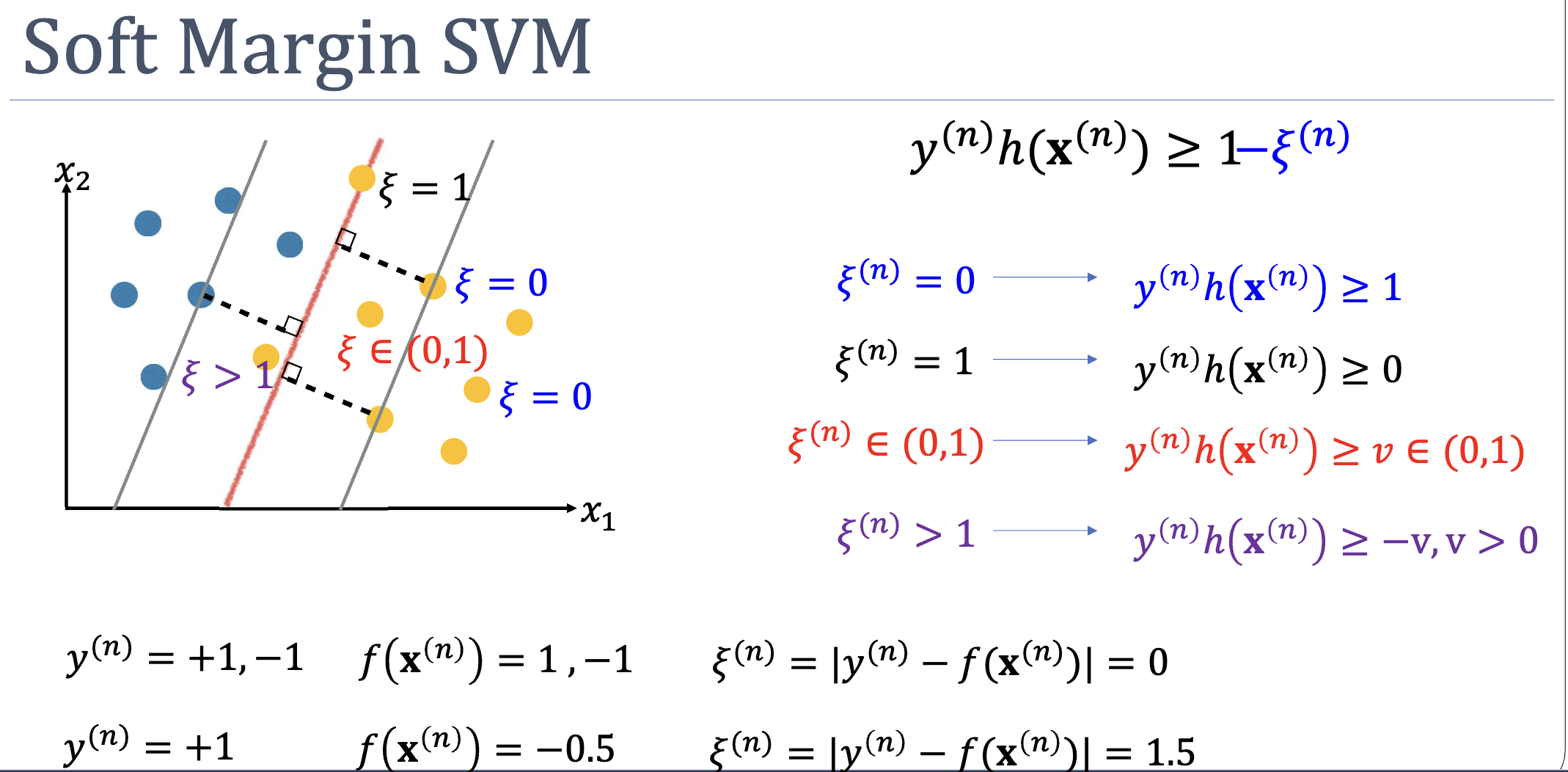

The hard-margin SVM requires every example to satisfy:

If the data is not linearly separable (in -space), this constraint set is empty and the optimisation has no feasible point. Slack relaxes the right-hand side by a non-negative amount:

Each is a per-example quota for “how much we’re willing to bend the margin rule for this point.”

Geometric Interpretation

The value of encodes the position of point relative to the margin and decision boundary:

| Geometric meaning | |

|---|---|

| Correctly classified, on or outside the margin (constraint holds) | |

| Correctly classified, but inside the margin envelope | |

| Sits exactly on the decision boundary () | |

| Misclassified — on the wrong side of the decision boundary |

A useful identity: — the hinge loss of example . If the example already satisfies the margin, ; otherwise, measures the shortfall.

Where Slack Enters the Objective

The slack-augmented soft-margin SVM objective is:

The sum is the total margin violation across the training set, weighted by the hyperparameter . Note: we minimise the sum of values, not the count of non-zero slacks. A misclassified example with costs more than two margin-grazing examples with each.

Slack on every example, even those that don't need it

Every training point gets its own in the formulation — including ones that are happily outside the margin. At the optimum those have , but they are still variables in the problem. The slack is never added selectively to violators; it is allocated to all and pinned to zero where the data permits.

Why Slack Always Hits the Constraint With Equality

At the optimum, whenever for any , the margin constraint is satisfied with equality:

This follows from KKT complementary slackness — see soft-margin-svm for the derivation. The practical upshot: is not just a budget upper bound on the violation, it equals the violation exactly.

Why Slack Doesn’t Run Away

If slack carried no penalty, the optimiser would dial every to infinity, making all constraints trivially satisfiable and shrinking to zero. The hyperparameter prevents this:

- Large : each unit of slack is expensive → the optimiser tolerates fewer/smaller margin violations → narrow margin, may overfit

- Small : slack is cheap → many points allowed inside or across the margin → wide margin, may underfit

See soft-margin-svm for the full -tradeoff discussion.

Active-Recall Questions

What does tell you about example ?

The example is correctly classified but lies inside the margin envelope. The margin constraint is satisfied with equality at , so the point sits 40% of the way from the margin towards the decision boundary, on the correct side.

A misclassified example always has larger slack than a correctly-classified one inside the margin. True or false?

True. Misclassified means , which forces . Correctly-classified-but-inside-margin means , which gives . So misclassified inside-margin .

However, between two misclassified examples, the one deeper into the wrong territory (more negative ) has the larger slack. A barely-misclassified point can have only slightly above 1.

Why minimise rather than ?

The count is non-convex and combinatorial — it would make the problem NP-hard. The sum is a convex relaxation: it preserves the ranking (more violation → worse objective) while keeping the QP tractable. As a side effect, the sum penalises severity not just occurrence: one badly-misclassified point hurts more than several margin-grazing ones.

Connections

- Component of soft-margin-svm — slack variables are the structural mechanism that makes SVM work on non-separable data.

- Hinge loss — is the per-example hinge loss; soft-margin SVM is equivalent to minimising mean hinge loss + L2 regularisation.

- Why slack ≠ misclassification indicator — examples with are correctly classified; only implies misclassification. The “slack count” overcounts errors.