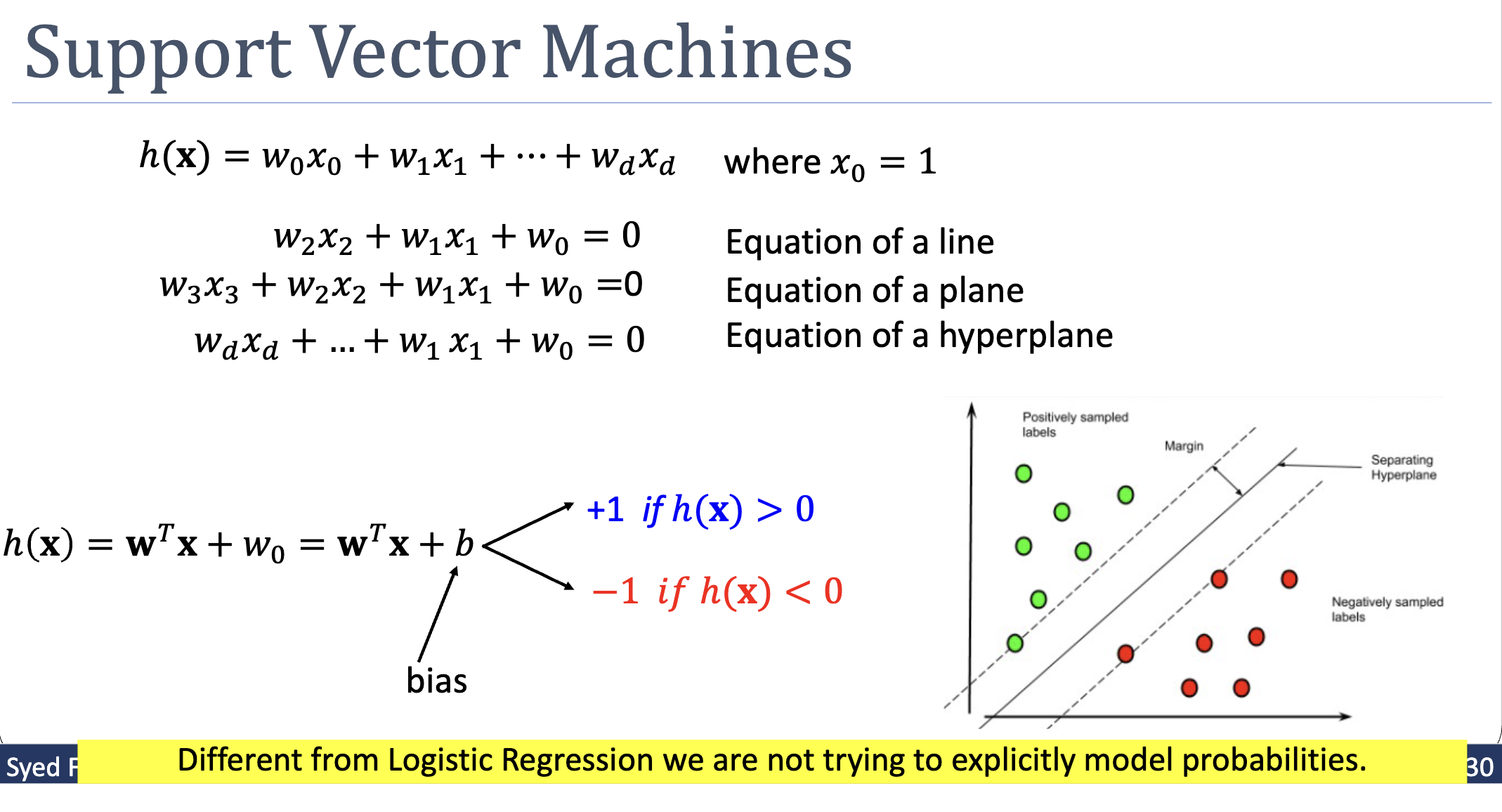

A linear classifier that, among all hyperplanes correctly separating the training data, picks the one that sits as far as possible from the closest training point. Maximising this perpendicular distance — the margin — turns out to be a convex quadratic program with a unique global optimum.

The Idea in One Picture

Linearly separable data admits infinitely many separating hyperplanes. Some pass close to a training point on one side; some pass through the wide gap in the middle. The latter are intuitively safer: small perturbations to a point are less likely to push it across the boundary. SVMs formalise “safer” as the margin — the perpendicular distance from the boundary to the closest training point — and pick the hyperplane that maximises it.

The training points that touch the resulting margin envelope are called support vectors. They are the only points that affect the boundary; every other training point sits comfortably on its side and could be removed without changing the answer.

The Hypothesis Set

with prediction:

SVM uses labels rather than — a convention that makes the constraints cleaner. The product is positive exactly when the prediction agrees with the label.

Unlike logistic-regression, SVM is not a probabilistic classifier — it doesn’t try to model . It just predicts which side of the hyperplane a point lies on.

Deriving the Optimisation

Step 1 — Express the margin. The perpendicular distance from to the hyperplane is . The margin is the minimum over training points:

Step 2 — Maximise the margin, subject to correctness. All training examples must be correctly classified — i.e., — and among those classifiers we want the one with the largest margin:

The absolute value drops because, under the correctness constraint, .

Step 3 — Canonical rescaling. The hyperplane is unchanged if we multiply by any positive scalar . Use that freedom to fix the scale so that the closest training point satisfies . Under this rescaling:

- The constraint becomes for all , with equality for at least one (the closest) point.

- The inner in the objective becomes .

- The objective collapses to maximising .

Step 4 — Convert max to min. Maximising is equivalent to minimising , which is equivalent to minimising . The half is conventional — it makes the gradient rather than . The squared norm is preferred because it’s smooth, strictly convex, and quadratic (which lets us use QP solvers).

The SVM Primal Problem

This is a quadratic program: convex quadratic objective with linear inequality constraints. Properties:

- Convex. Unique global optimum; any descent method reaches it.

- Quadratic. Off-the-shelf QP solvers handle it efficiently.

- Sparse solution. At the optimum only a few constraints are active (binding with equality) — these correspond to the support vectors.

Why the Two Constraint Forms Are Equivalent

The optimal SVM solution has for some training points (the support vectors) and for the rest. So the constraints "" and "" describe the same optimum:

- The form is looser — it permits .

- The form is stricter — it forces the closest point to be exactly on the margin.

Because we’re minimising , we want to be as small as possible — i.e., the margin to be as large as possible. The smallest that satisfies everywhere is the one that achieves at the binding constraint. The two formulations have the same optimum.

Support Vectors

At the optimum, each training point falls into one of three cases:

| Condition | Meaning |

|---|---|

| On the margin — support vector | |

| Strictly beyond margin — does not affect the boundary | |

| Misclassified — impossible in hard-margin SVM (constraint violated) |

The third case can’t occur because the constraints rule it out. (For non-separable data, soft-margin SVMs introduce slack variables that allow misclassifications at a cost — covered later.)

The boundary depends only on the support vectors. Delete any non-support-vector point and re-train: the same hyperplane comes out. This is the structural reason SVMs are described as “data efficient” — only a handful of training points actually matter for the decision.

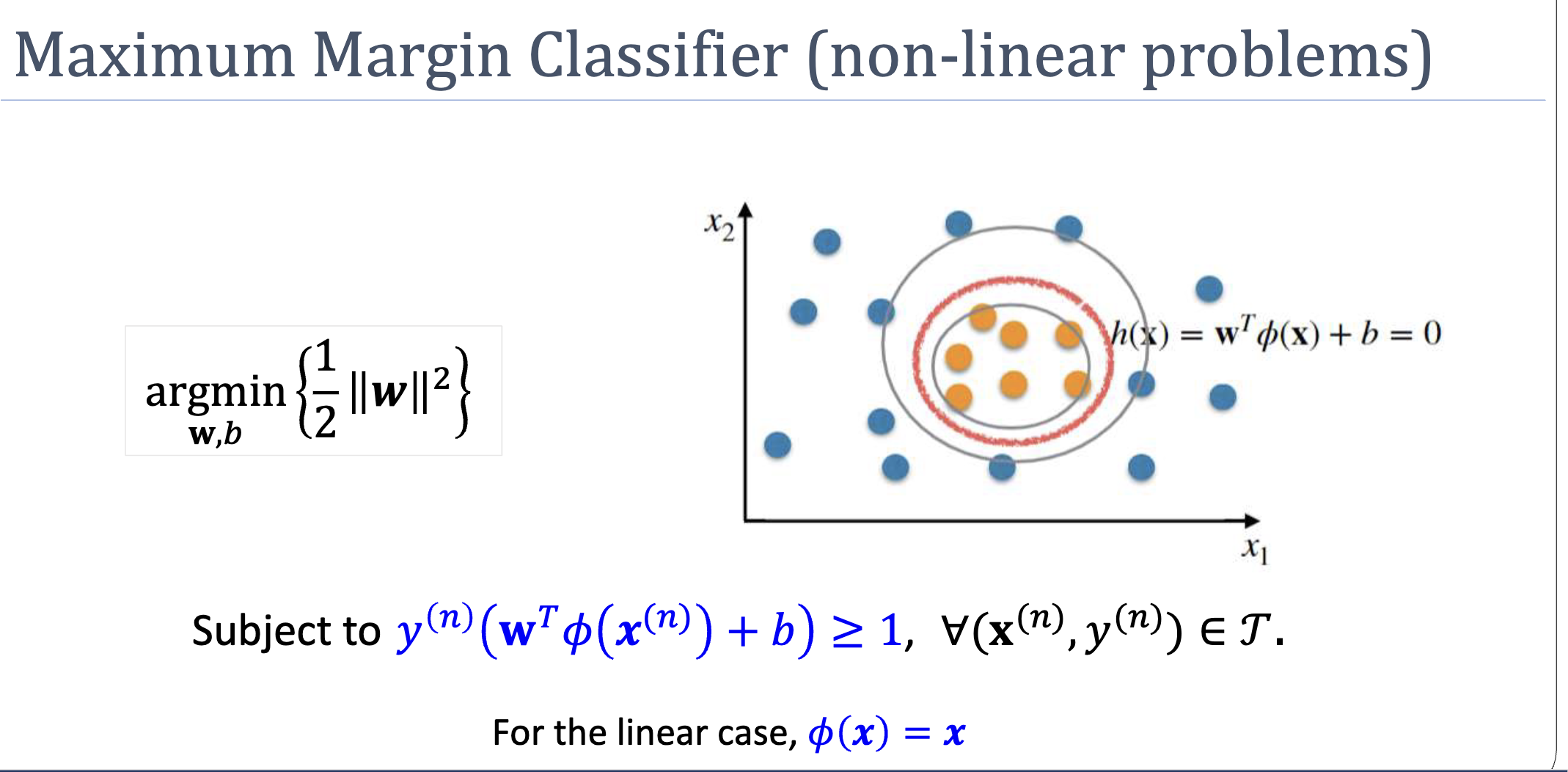

Combining With Basis Expansion

Nothing in the derivation requires that the data be linearly separable in the original space. Plug in a basis expansion and you get a non-linear SVM:

The boundary is a hyperplane in -space, but a curved (polynomial, circular, etc.) surface in the original space. With the SVM can carve out elliptical decision boundaries — perfect for the canonical “concentric rings” example.

The catch: may be very high-dimensional, making the QP large. The fix — using kernel functions to evaluate without ever forming — is the kernel trick, covered in week 4 via the dual formulation (see below).

The Dual Representation

The primal QP has unknowns (). Apply Lagrange duality: the constraints each get a multiplier , and minimax-swap to maxmin. Setting and gives:

Substituting back eliminates and , leaving the dual:

subject to and .

Three structural consequences:

- The dual has unknowns, not . When — high-degree polynomial expansion or Gaussian kernel — the dual is dramatically smaller.

- Data appears only through inner products . Replace each with a kernel function evaluated in the original space — that’s the kernel-trick.

- Sparsity from complementary slackness. KKT’s forces for every non-support-vector. The dual sum collapses to a sum over support vectors only.

Predictions in dual form:

where is the support-vector index set, and is computed by averaging over support vectors:

SVM vs Logistic Regression

| Logistic regression | SVM | |

|---|---|---|

| Output | Calibrated probability | Class label (no probability) |

| Loss | Cross-entropy (smooth, all examples contribute) | Hinge / margin-based (only support vectors contribute) |

| Decision boundary | Any separating hyperplane the optimiser converges to | Maximum-margin hyperplane (unique) |

| Convex? | Yes | Yes |

| Handles non-separable? | Yes (still converges, weights blow up if perfectly separable) | Hard-margin SVM fails; soft-margin SVM works |

| Labels | ||

| Support for ? | Yes (basis expansion) | Yes (and kernel trick in dual form) |

For probability calibration, choose logistic regression. For maximising margin and exploiting the kernel trick, choose SVM. Both are linear-in- models, both are convex, and both extend non-linearly via .

Related

- margin — the geometric quantity SVM maximises

- quadratic-programming — the optimisation form SVM reduces to

- non-linear-transformation — combine with SVM for non-linear boundaries

- logistic-regression — alternative linear classifier with a probabilistic output

- decision boundary — the hyperplane SVM produces

- lagrangian — duality is what produces the dual SVM and enables kernels

- kkt-conditions — complementary slackness is the structural reason SVMs are sparse

- kernel-trick — replace inner products in the dual with a kernel function

Active Recall

Why does SVM use labels rather than ? Walk through the role of the product .

The convention makes the correct-classification check uniform: is true exactly when the predicted side matches the true label. With , is needed; with , is needed; the product handles both cases in one expression. The same trick lets us drop the absolute value from the margin formula under the correctness constraint, simplifying the algebra significantly.

Why does maximising the margin reduce to minimising — surely larger weights should mean a larger margin?

The intuition reverses once you account for canonical rescaling. After rescaling so that the closest training point has , the perpendicular distance from that point to the boundary is . To make the margin large, must be small. Geometrically, controls how rapidly changes as you move away from the boundary; a steep reaches the value close to the boundary (small margin), while a gentle reaches far away (large margin). The squared norm is used in the objective because it’s smooth and yields a clean QP.

A hard-margin SVM has been trained. Suppose you receive 5 new training examples, all of which sit safely outside the margin envelope on the correct side. How does the decision boundary change?

Not at all. None of the new points become support vectors (they don’t touch the margin envelope), so the binding constraints of the QP are unchanged. The optimal is unchanged. This is the same property that made non-support-vectors disposable in the original training set: the boundary is determined entirely by the points sitting on the margin’s edge, regardless of how many “easy” points exist around them. (If a new point landed inside the margin or on the wrong side, the answer would be very different — for hard-margin SVM, no solution exists; for soft-margin SVM, the hyperplane shifts to accommodate it at a cost.)