THE CRUX: Week 4 gave us a kernelised SVM dual that is mathematically clean — but it assumes the data is linearly separable in -space, and even when it is, the -variable QP is too expensive to solve directly. Two open ends. How do we (a) handle data that can't be separated cleanly, and (b) actually compute the optimum at scale?

The fix is a pair of complementary changes. (a) Introduce a slack variable for each training example, relaxing the constraint to , and add to the objective. The hyperparameter trades margin width against violation tolerance. The dual is identical to last week’s except for one detail: is now bounded above by — the box constraint. (b) Solve the QP via Sequential Minimal Optimization (SMO): pick a pair of multipliers , solve the two-variable subproblem analytically, clip to feasibility, repeat. Two variables is the minimum required because the equality constraint pins any single multiplier in place.

Last week ended with the kernelised dual:

Two unanswered questions hang over it:

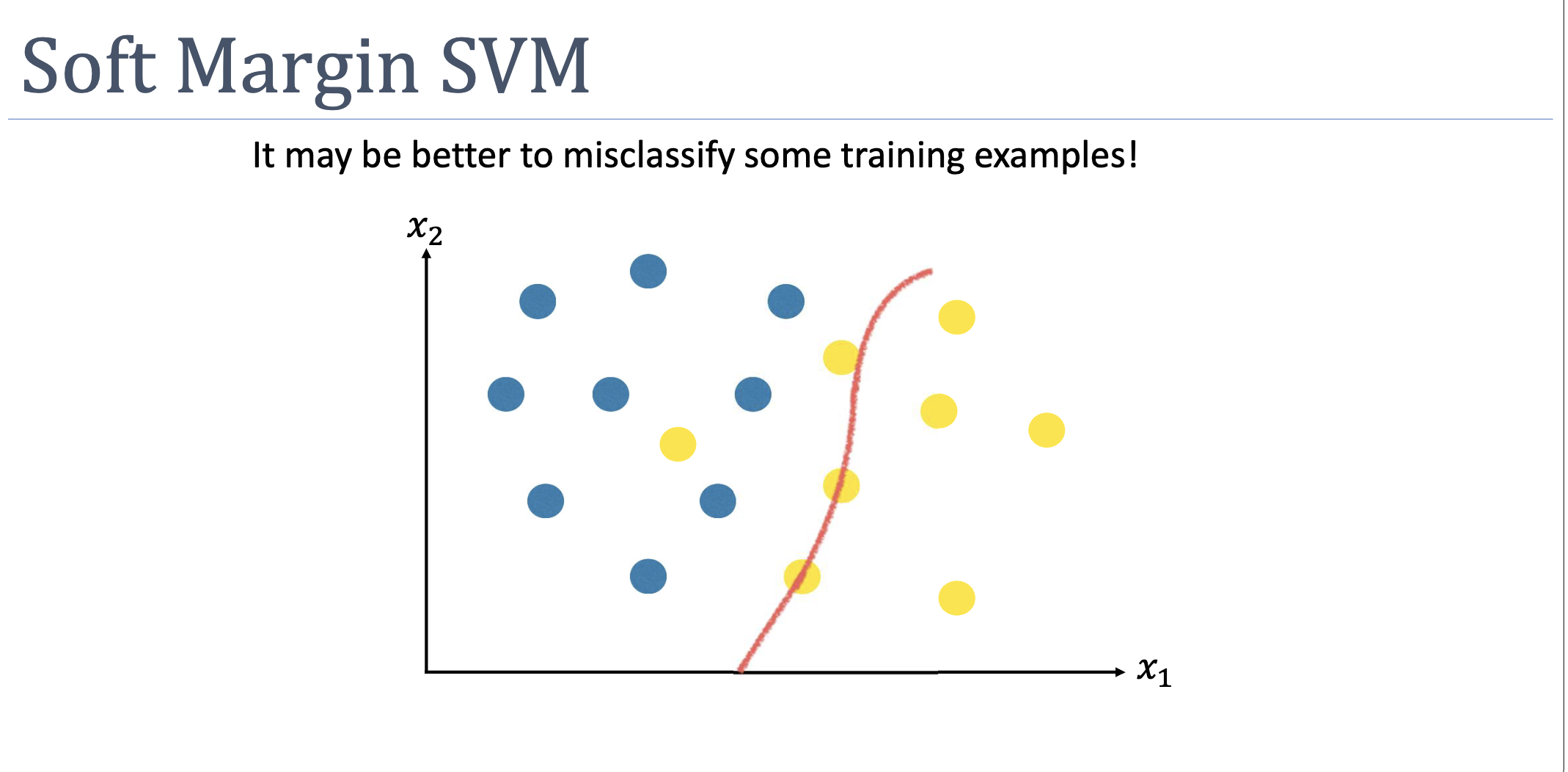

- What if no separating hyperplane exists? With a Gaussian kernel, -space is infinite-dimensional, so some separator almost always exists — but it may be over-specific to the training data. With a linear kernel and overlapping classes, no separator exists at all. Either way: hard-margin SVM is brittle.

- How do we actually solve this? variables, with a Gram matrix that’s , dense, and stored in memory. Off-the-shelf QP runs out of room past a few thousand examples.

Week 5 closes both. Part 1 (Monday) introduces slack and the soft-margin dual. Part 2 (Tuesday) introduces SMO.

Part 1: Soft-Margin SVM

From Hard to Soft

The hard-margin constraint says “every point is at least one margin-unit on the correct side”:

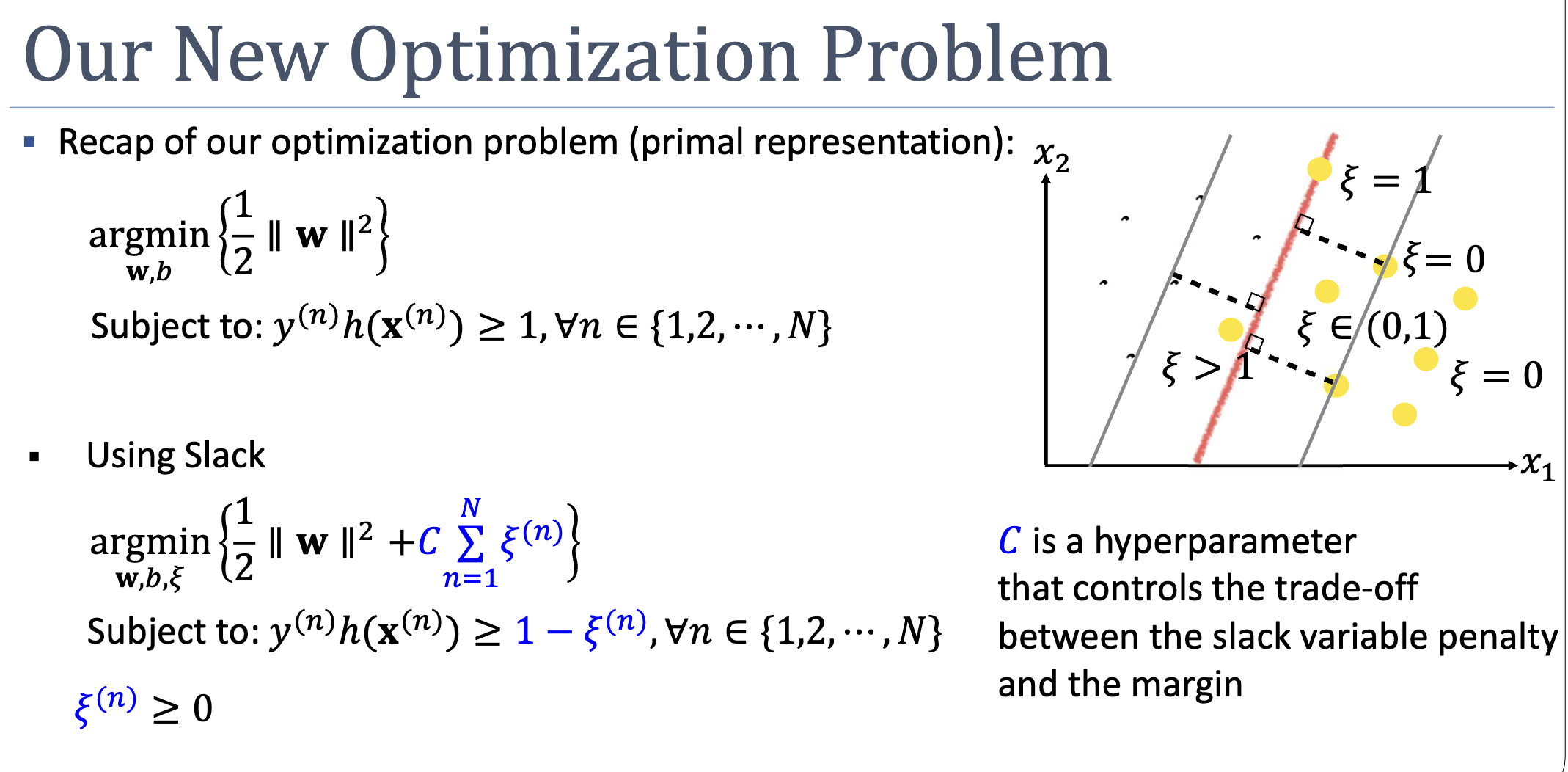

If even one point violates this, the constraint set is empty. The relaxation: each point gets a personal violation budget :

Now the constraint is always satisfiable — set large enough and any classification works. To stop the optimiser from making slack infinite, penalise it in the objective:

The hyperparameter sets the slack price. Large → expensive slack → narrow margin, behaves like hard-margin. Small → cheap slack → wide margin, many margin-violators tolerated.

Reading Geometrically

The value of at the optimum encodes where point sits:

| Position | |

|---|---|

| Outside or on the margin envelope (constraint slack) | |

| Inside the margin envelope, correctly classified | |

| Exactly on the decision boundary | |

| Misclassified — wrong side of the boundary |

A clean identity: . This is exactly the hinge loss — soft-margin SVM is L2-regularised hinge-loss minimisation.

Slack is a value, not a count

The objective penalises — the total magnitude of violation, not the number of violators. One badly-misclassified point () costs more than three margin-grazers ( each). The formulation cares about depth, not breadth.

The Margin Definition Changes

In hard-margin SVM, the margin was defined as the distance from the boundary to the closest training point (canonically rescaled to ). With soft margin, “closest training point” is no longer well-defined — some points lie inside the margin. The margin is now simply as a definitional choice, decoupled from any particular training point.

The Dual: Almost Unchanged

Apply Lagrangian relaxation as before. We now have two sets of multipliers — for the margin constraint and for :

Setting partials of to zero gives:

- (same as hard-margin)

- (same)

- New: , i.e.,

The third relation, combined with and , gives the box constraint:

Substituting back, and vanish entirely. The dual is identical to the hard-margin one, with one upper-bound difference:

The kernel trick still applies. Predictions still use . The entire effect of slack collapses, in dual form, to the single change .

Three Categories of Support Vector

KKT complementary slackness gives two equations:

These split training points into three regimes:

| Type | Position | ||

|---|---|---|---|

| Not a support vector | Strictly outside the margin | ||

| Margin support vector | Exactly on the margin: | ||

| Bound support vector | Anywhere from on-margin to misclassified |

When , then , which forces via the second condition; combined with this pins the point exactly on the margin via the first. When , the second condition is automatically satisfied for any , so the point can sit anywhere — including misclassified.

This is why only margin support vectors are used to compute :

Bound SVs () have in general, so substituting them gives the wrong .

Sparsity, Weakened But Preserved

In hard-margin SVM, only on-margin points have — typically a tiny fraction. In soft-margin SVM, every margin violator also has , expanding the support set. But examples comfortably outside the margin still have and contribute nothing to predictions. Sparsity is weaker but not lost.

Part 2: Sequential Minimal Optimization

The dual is a convex QP with variables, an Gram matrix, box constraints, and a linear equality. Off-the-shelf QP solvers run in time and store the full Gram matrix in memory. For that’s already 80GB and trillions of operations. We need a method that exploits the structure.

The Decomposition Idea

Instead of solving for all multipliers at once, fix most of them and optimise over a small subset. With two at a time, the subproblem has a closed-form analytic solution.

Why two and not one? The equality constraint couples the multipliers. Picking just to update with everything else fixed forces:

so is pinned. Two multipliers can move while preserving the constraint — a change in is absorbed by a compensating change in .

The SMO Loop

Initialise a^(n) = 0 for all n. # feasible: 0 ∈ [0,C], Σ a y = 0

Repeat until convergence:

Pick (i, j) heuristically.

Compute a^(j,new) analytically.

Clip a^(j,new) to [L, H] for box feasibility.

Recover a^(i,new) from the equality constraint.

Convergence is signalled when no example violates KKT within tolerance.

The Analytic Update

Substituting as a function of (via ) into the dual objective and differentiating w.r.t. :

where is the prediction error on example .

The denominator is the squared distance between the two examples in feature space. The numerator scales with the error disagreement. Far-apart examples with very different errors produce big steps.

Clipping

The candidate may exceed , or push (computed from it) outside the box. The feasible interval for depends on whether :

- Same labels (, line ):

- Different labels ():

Then clip:

and recover .

Picking the Pair: Heuristics

Convergence is guaranteed for any selection — but speed is hugely heuristic-dependent. Standard recipe:

For (the first): Alternate between (1) random selection among examples violating KKT within tolerance , and (2) random selection among margin SVs () violating KKT. Strategy 2 keeps the search on the active set; strategy 1 occasionally checks bound multipliers.

For (the second): Try in order until a positive improvement in is observed:

- Maximum step heuristic. Pick the that maximises — approximates the largest update size.

- Iterate over non-bound multipliers.

- Iterate over the entire training set.

- If still no improvement, replace and try again.

Errors are cached and updated incrementally.

KKT Conditions, Restated

In SMO terms, the optimum is characterised entirely by :

| Condition | Geometric meaning |

|---|---|

| Outside margin; not a SV | |

| On margin; margin SV | |

| On, inside, or violating margin; bound SV |

When every example satisfies its KKT condition within tolerance, the dual is at its optimum.

What Could Go Wrong

- too large. Acts as hard-margin in disguise. Inherits all the overfitting and brittleness problems we introduced soft margin to avoid.

- too small. Slack is essentially free. The decision boundary collapses — any classification satisfies the constraints with cheap-enough . The model underfits, possibly degenerating to “always predict the majority class.”

- No margin support vectors. If every SV is at , the formula for has no inputs. Pick any of them and accept the resulting .

- Numerical issues in SMO when . The denominator . Skip the pair.

- Lazy SMO heuristics. Random-random pair selection still converges, but may be 10×–100× slower than max-step. The heuristics matter.

Concepts Introduced This Week

- soft-margin-svm — SVM with slack-relaxed constraints; introduces hyperparameter ; dual differs from hard-margin only in the box constraint .

- slack-variables — per-example violation budgets ; equal hinge loss at the optimum; enable SVM on non-separable data.

- sequential-minimal-optimization — decomposition algorithm for the SVM dual; iteratively updates pairs of multipliers analytically; two is the minimum because of the equality constraint.

Connections

- Builds on week-04 — uses the same Lagrangian / KKT / kernel machinery; the dual has the same form except for the box constraint.

- Builds on support-vector-machine and kernel-trick — soft margin is a relaxation, not a replacement; combined with kernels (especially Gaussian) it is the default

sklearn.svm.SVC. - Builds on kkt-conditions — complementary slackness now gives a three-way support-vector classification (non-SV / margin-SV / bound-SV) instead of two-way.

- Sets up later weeks: regularisation framework ( is one example of the bias-variance trade-off knob); generalisation theory (margin-based bounds explain why widening the margin via small can improve test error); model selection (cross-validating , , kernel choice).

Open Questions

- How do we choose (and the kernel hyperparameters) in practice? Cross-validation, but on what scale and grid?

- Why does SMO converge — what guarantees the analytic two-variable updates strictly increase until KKT is satisfied? (Convexity of + careful pair selection; the formal proof is in Platt’s original paper.)

- For multi-class problems, how does soft-margin SVM extend? (One-vs-rest, one-vs-one, Crammer–Singer — covered in later weeks or as background reading.)