The negative log-likelihood for a probabilistic classifier — measures dissimilarity between the predicted class distribution and the true labels. For binary logistic regression, it is strictly convex in .

Definition

For binary classification with predicted probability and ground-truth label , the cross-entropy loss over a training set of size is:

The two terms are a switch driven by the binary label:

- If , only the first term survives: .

- If , only the second: .

In both cases, you’re penalised by the negative log of the probability the model assigns to the correct class.

Behaviour

The shape of for does most of the work:

| Predicted probability of correct class | Loss contribution |

|---|---|

The loss explodes as the model confidently predicts the wrong class — confident wrongness is punished disproportionately, which is exactly what a calibrated probabilistic classifier should optimise.

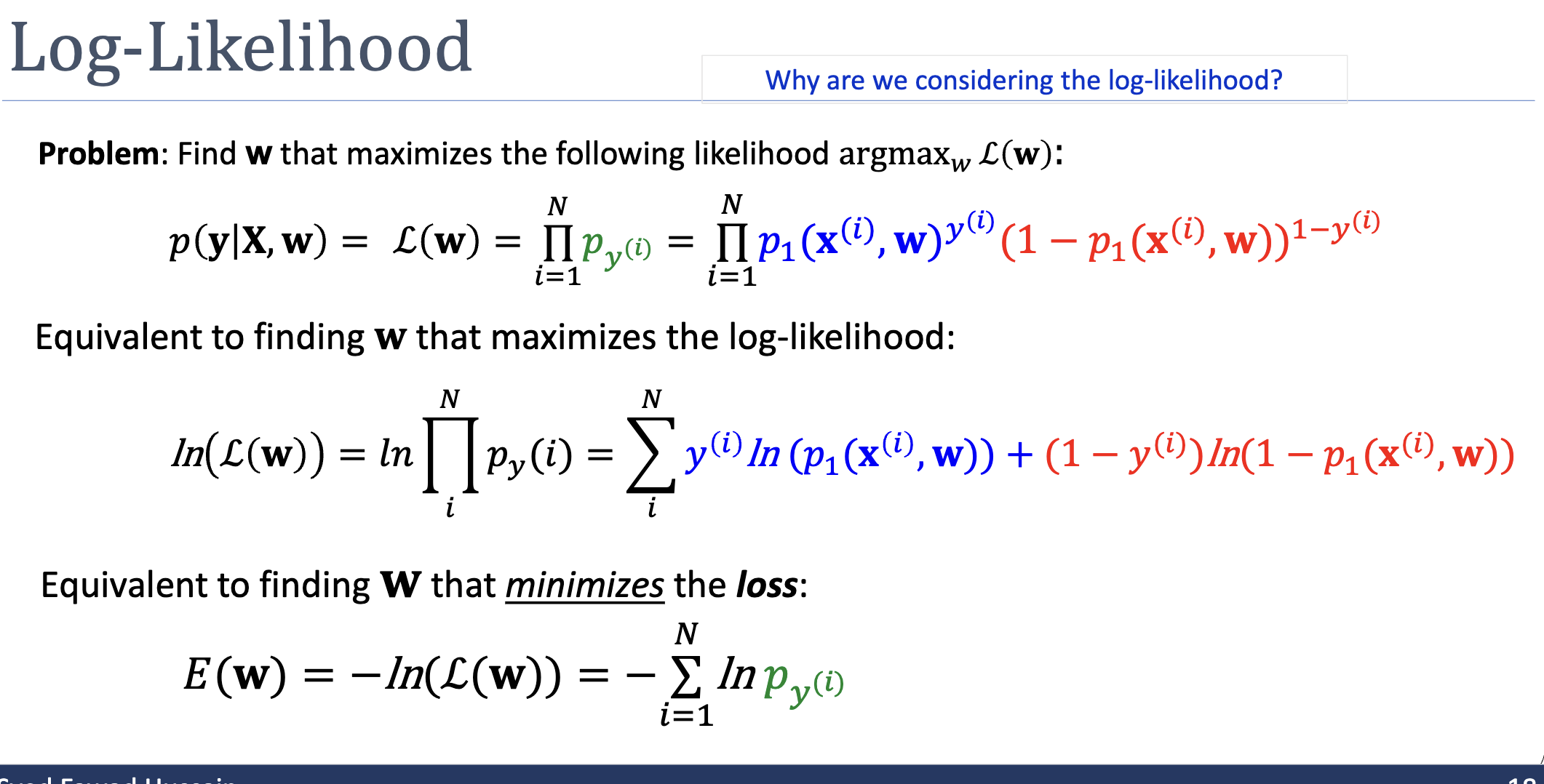

Connection to Maximum Likelihood

Cross-entropy isn’t an arbitrary choice. It is exactly the negative log-likelihood under MLE. Starting from the likelihood and applying :

Maximising likelihood ↔ minimising cross-entropy. Same optimum, different signs.

Why “Cross-Entropy”?

In information theory, the cross-entropy between two distributions (true) and (predicted) over a discrete outcome is:

For each training example, the true distribution is a one-hot — all probability mass on the actual label — and is the model’s predicted distribution. The per-example cross-entropy reduces to , and the loss is the empirical sum across examples. So the loss measures the average dissimilarity between the model’s predicted distributions and the (one-hot) ground-truth distributions.

Convexity

The cross-entropy loss for logistic-regression is strictly convex in . This is the property that makes optimisation tractable: there is a single global minimum, and any reasonable iterative method (gradient descent, newton-raphson-method) will find it.

This convexity does not generalise to deeper models. A neural network with cross-entropy loss is non-convex in its parameters because the network’s output is a non-convex function of its weights — the loss itself is still convex in the output, but composing with the network breaks convexity.

Gradient

The gradient of the cross-entropy loss with respect to , when , is remarkably clean:

That is, the prediction error multiplied by the input vector, summed over the training set. The cleanness comes from a cancellation between the derivative of and the derivative of the sigmoid: the chain rule produces , which exactly cancels the terms, leaving the residual.

Related

- maximum likelihood estimation — cross-entropy is the negative log-likelihood

- logistic-regression — cross-entropy’s primary user in this module

- gradient descent — the natural minimiser

- convex-function — the property that makes cross-entropy easy to optimise

Active Recall

For a single training example with and predicted , what does the example contribute to the loss? What about with ?

Both contribute — the maximum possible loss when the model is at chance level. This is the “natural” reference point: a model that always predicts gets a per-example loss of .

Why does the loss go to infinity when the model predicts for an example with ?

When , the loss term is . As , . Information-theoretically: the model claimed “this outcome is impossible,” but it happened. Any finite penalty would be an under-statement of how badly that prediction misrepresented reality.

Show that the gradient of the per-example loss with respect to simplifies to , given .

Let so . By the chain rule, . The derivative of with respect to is . Multiplying by , the factors cancel, leaving .