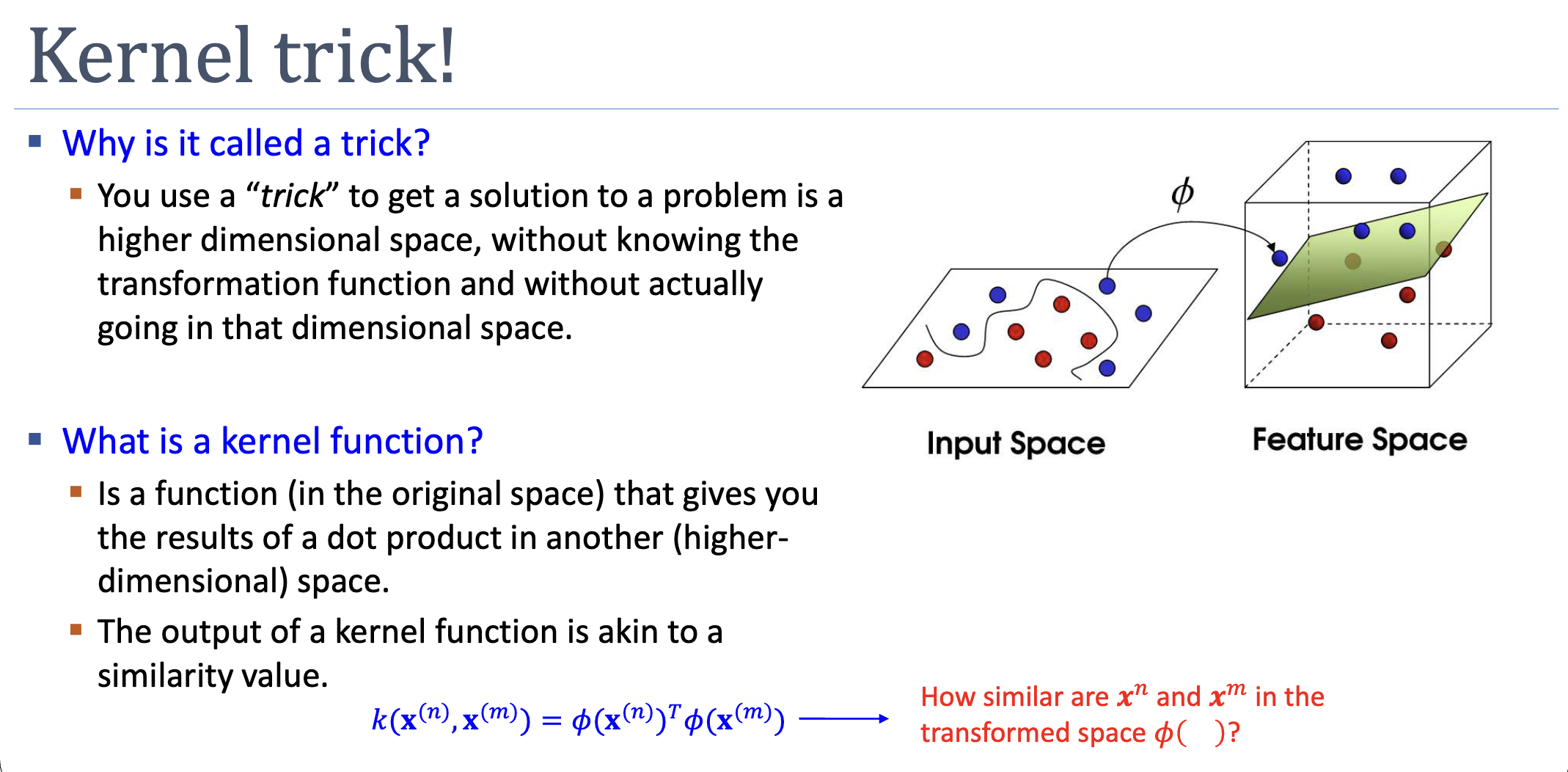

A “trick” because we get the benefit of working in a high-dimensional embedding — sometimes infinite-dimensional — without ever computing it. Whenever an algorithm depends on data only through inner products , we can replace those inner products with a kernel function that returns the same value computed entirely in the original space.

What is a Kernel Function?

A kernel function takes two points in the original input space and returns a real number:

It is the inner product of the two points after they’ve been mapped into some feature space . Conceptually, measures similarity — how aligned the two vectors are in the embedded space.

The trick: we don’t have to compute to evaluate . For many useful , there’s a closed-form expression for that uses only the original-space coordinates.

Worked Example: Polynomial Kernel of Degree 2

Take and the embedding:

(The factors are chosen to simplify the algebra below.) Computing the inner product explicitly:

This factors as . So:

Two operations in the original space (one inner product, one square) replace six multiplications in the 6-dimensional feature space. As the polynomial degree grows, the saving compounds.

Why the Dual Lets Us Use Kernels

Recall the SVM dual:

The data appears only through pairwise inner products . Replacing each with makes the entire optimisation independent of :

Same story for prediction:

where is the set of support-vector indices. Train and predict, all without forming once.

Kernel Functions Without an Explicit

The deeper insight: we don’t need to design first. We can write down a similarity function directly, as long as it corresponds to some valid inner product in some feature space. The condition that decides which functions qualify is Mercer’s condition (see mercers-condition).

This unlocks two things:

- Infinite-dimensional embeddings: the RBF kernel corresponds to an infinite-dimensional , which we’d never be able to compute explicitly.

- Non-numeric data: kernels can be defined directly on strings, trees, graphs, sets — anything for which a similarity function exists. The “feature space” is implicit, never realised.

When the Kernel Trick Pays Off

In the primal, computation per training example is . In the dual with a kernel, it’s per kernel evaluation, times pairs. Dual is preferred when:

- (high-dimensional or infinite embedding, modest training set), or

- is implicit (no closed form), or

- the input space is non-numeric.

When is small relative to (e.g., low-degree polynomial, large dataset), the primal can actually be cheaper.

Active Recall

The Gaussian kernel corresponds to an infinite-dimensional embedding. Why is this not a computational disaster?

Because we never compute the embedding. The kernel is evaluated entirely in the original -space — one subtraction, one squared norm, one exponential. The infinite dimensionality is structural (the Taylor series of contains arbitrarily high-order monomials), but invisible to the algorithm. We get the expressive power of an infinite feature space at the cost of evaluating a single 2-argument function.

Can any function I think captures "similarity" be used as a kernel?

No. The function must correspond to an inner product in some embedding — equivalently, the Gram matrix must be symmetric and positive semidefinite for any finite set of inputs (Mercer’s condition). Otherwise the dual SVM optimisation may be ill-posed (non-convex), produce nonsense predictions, or break the geometric guarantees. In practice we either construct from validated building blocks (linear, polynomial, Gaussian) using composition rules, or verify Mercer’s condition directly.

The dual SVM's prediction is . Why does the prediction time depend on the number of support vectors, not the number of training examples?

Because of complementary slackness: at the optimum, for all non-support-vectors. Their contribution to the sum vanishes regardless of . Storing only the support vectors (with their and ) is sufficient to make any future prediction. This is a key efficiency: a typical SVM trained on points might use only or fewer support vectors, making prediction dramatically faster than re-evaluating against the full training set.

Related

- lagrangian — duality is what produces a kernel-friendly optimisation

- mercers-condition — when a candidate function is a valid kernel

- gaussian-kernel — most popular kernel; infinite-dimensional embedding

- polynomial-kernel — generalises the worked example above

- support-vector-machine — the canonical algorithm where the trick lives